An ocean explorer, a filmmaker and an AI pioneer with something unexpected in common

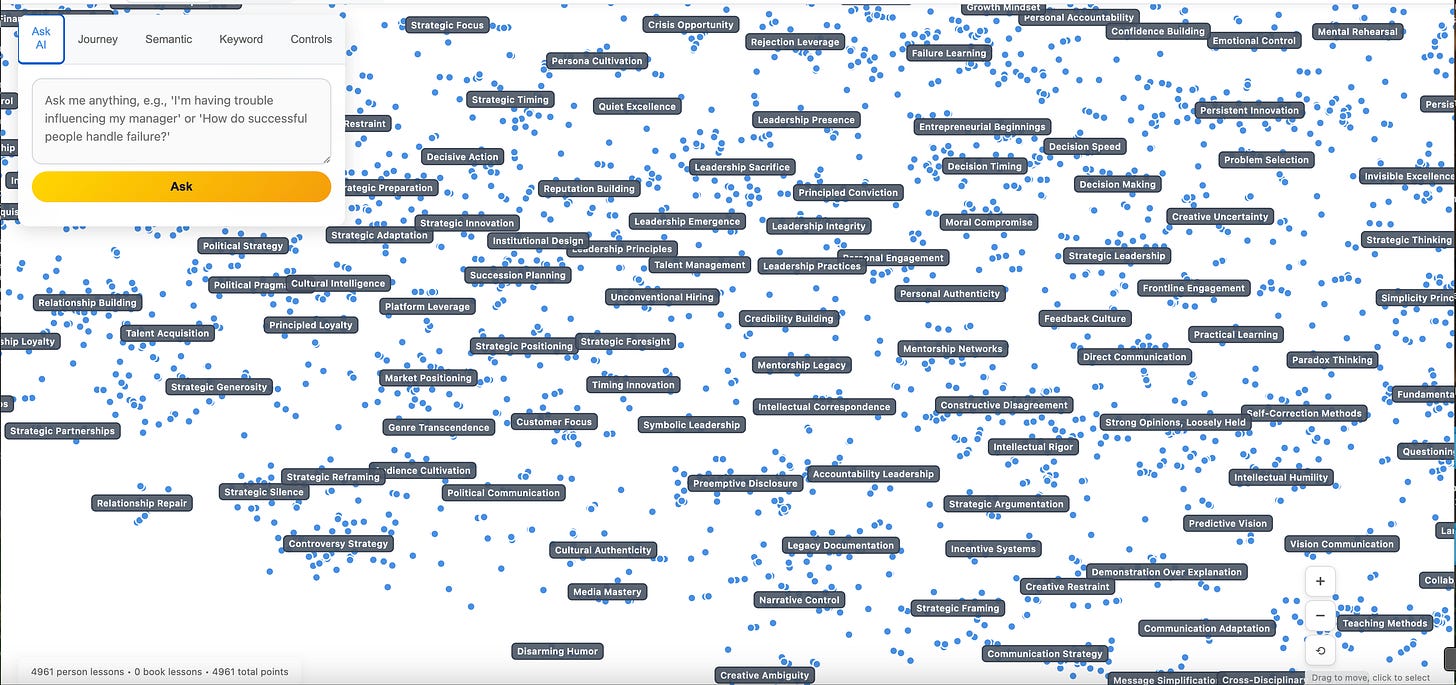

Over the past six months I built a tool that maps 5,017 lessons from 396 remarkable people -- scientists, athletes, founders, philosophers, artists, everyone from Taylor Swift to Winston Churchill. When I first tried this in 2023, AI wasn’t good enough to make it interesting. It is now.

This is what it looks like:

It’s a map of what it takes to be successful.

On this Substack I’ll be sharing outcomes from the system and what I’m learning by building it. And as the very first cluster I’ve shared publicly, this one is pretty fitting.

Jacques Cousteau couldn’t explore the ocean because diving technology limited humans to minutes underwater. So in 1943, he partnered with an engineer named Émile Gagnan to invent the Aqua-Lung. Gagnan’s regulator was originally designed for cars running on cooking gas during wartime fuel rationing. Cousteau looked at it and saw the ocean floor. That invention created a $4 billion recreational diving industry and made possible every underwater documentary you’ve ever watched. Without the tool, the exploration couldn’t happen.

Some six decades later, James Cameron faced the same problem in a completely different domain: no existing camera system could capture what he envisioned for Avatar. So he co-invented the Fusion Camera System and Simulcam technology, spending millions of dollars on the project. The system became the industry standard for 3D filmmaking. The tool outlived the project.

And in the decades between them, Marvin Minsky, one of the founders of artificial intelligence, was building programming languages, inventing the confocal scanning microscope, and designing theoretical frameworks, all because existing tools constrained what he could think.

The lesson is this: Your imagination can reach much further than the tooling, but you’d be surprised how quickly the tooling can catch up.

I didn’t design this cluster, and my AI wasn’t told to look for it. I loaded thousands of lessons into a semantic space and asked: What connects? The math of semantic embeddings found this pattern on its own. There are thousands more like it, lessons that were hiding in plain sight.
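For the curious, here is a minimal sketch of what "asking what connects" can look like. It is not the actual system. The three-number vectors are made-up stand-ins for real embeddings, which would come from an embedding model and have hundreds of dimensions, and the lesson names, the `cluster` helper and the 0.9 threshold are all assumptions chosen for illustration.

```python
import math

# Hypothetical toy data: hand-made vectors standing in for real embeddings.
# The last two entries are placeholders, not lessons from the actual map.
LESSONS = {
    "cousteau_aqualung": [0.90, 0.10, 0.00],
    "cameron_camera": [0.80, 0.20, 0.10],
    "minsky_microscope": [0.85, 0.05, 0.15],
    "placeholder_a": [0.10, 0.90, 0.10],
    "placeholder_b": [0.05, 0.85, 0.20],
}


def cosine(a, b):
    """Cosine similarity: 1.0 means same direction, 0.0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def cluster(embeddings, threshold=0.9):
    """Greedy grouping: a lesson joins the first existing group that
    contains a member at least `threshold` similar to it; otherwise it
    starts a new group."""
    clusters = []
    for name, vec in embeddings.items():
        for group in clusters:
            if any(cosine(vec, embeddings[m]) >= threshold for m in group):
                group.append(name)
                break
        else:
            # No existing group was close enough.
            clusters.append([name])
    return clusters


if __name__ == "__main__":
    for group in cluster(LESSONS):
        print(group)
```

With these toy vectors the three tool-building lessons land in one group and the two placeholders in another. Nobody told the code that Cousteau, Cameron and Minsky belong together; the grouping falls out of the distances. A real pipeline would likely use a proper clustering algorithm instead of this greedy pass, but the principle is the same.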

If this catches your interest, I’m looking for a small number of pre-release testers -- just reply to this post, or send me a DM.